Cognition Without Brains: How Memory Emerges in Polymers, Cells, and Spacetime

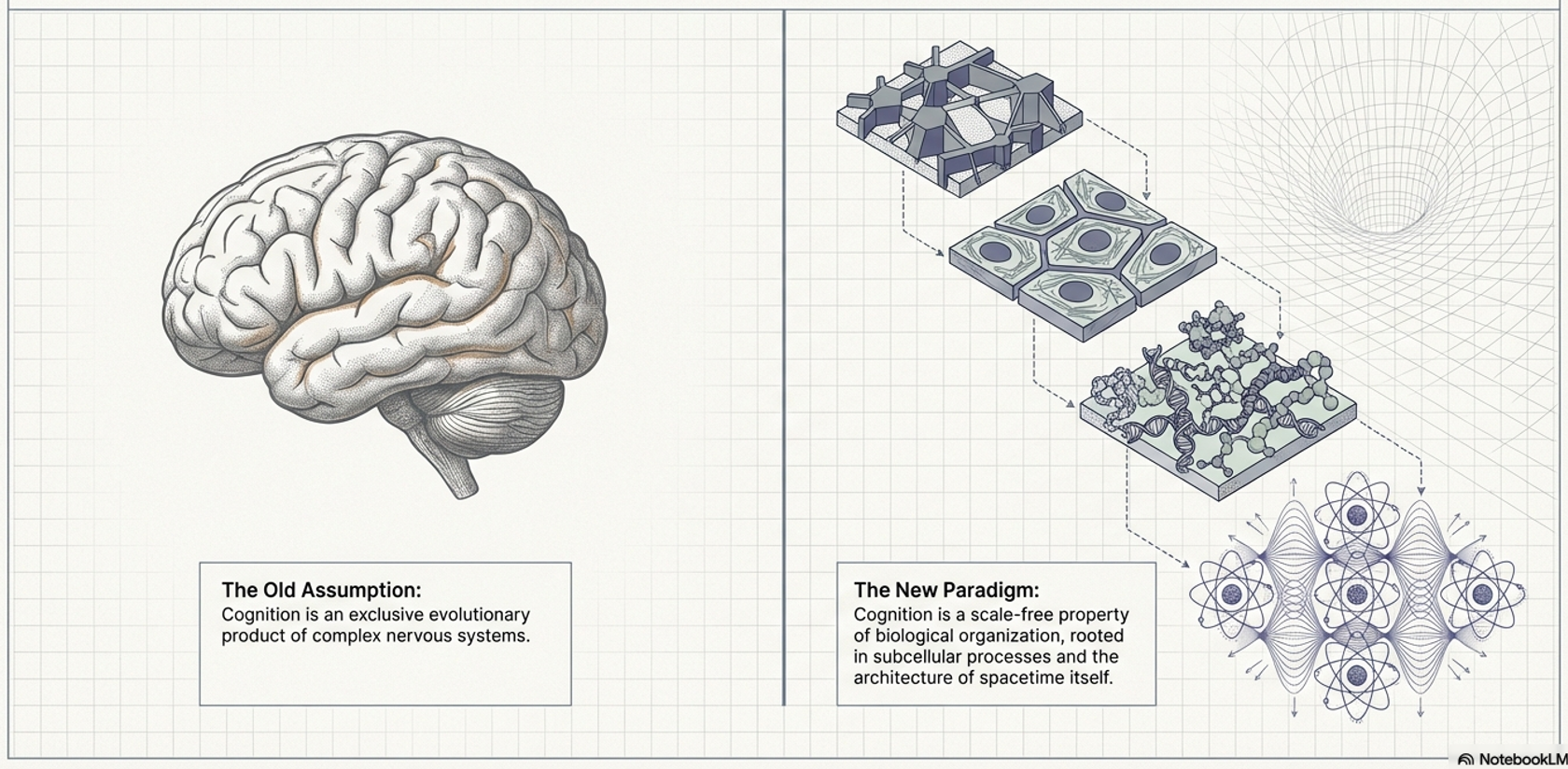

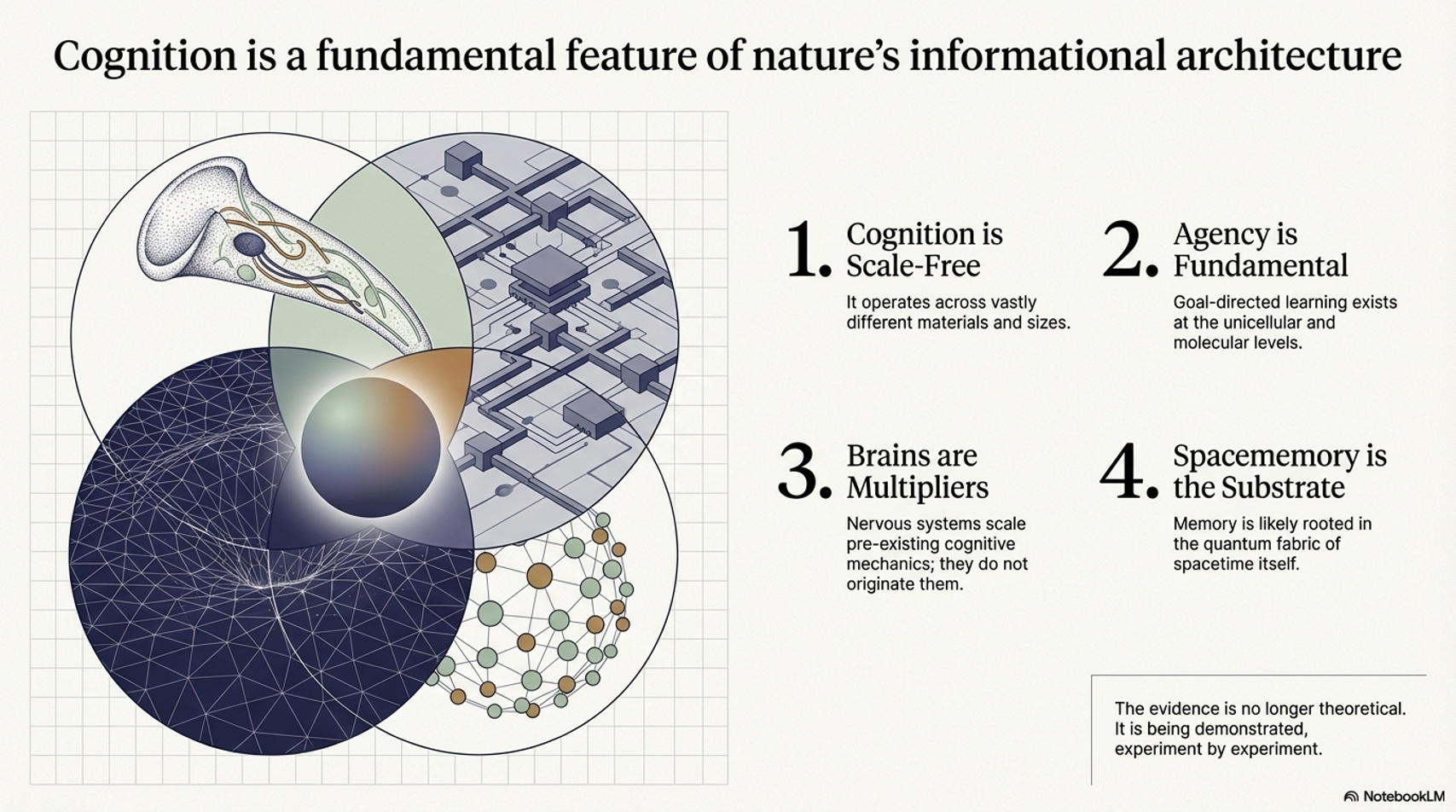

The Expanding Evidence that Cognition is a Scale-Free Property of Nature

In a previous article, we explored how the trumpet-shaped protist Stentor roeseli can systematically alter its behavioral responses to repeated stimuli — escalating through a hierarchy of avoidance strategies as if “changing its mind” — despite possessing no neurons, no synapses, and no brain.

Stentor responding to external stimulus, causing it to retract

That article examined how unicellular organisms from Physarum slime molds to bacteria demonstrate genuine memory-like behaviors, and argued that these findings support the idea that cognitive capacities are not late evolutionary innovations exclusive to complex nervous systems, but rather fundamental, scale-free properties of biological organization — properties that may be rooted in subcellular quantum processes and the architecture of spacetime itself.

Since that article was published, the pace of new results has accelerated, and the evidence is now even more compelling.

Pavlovian Conditioning Without a Single Neuron

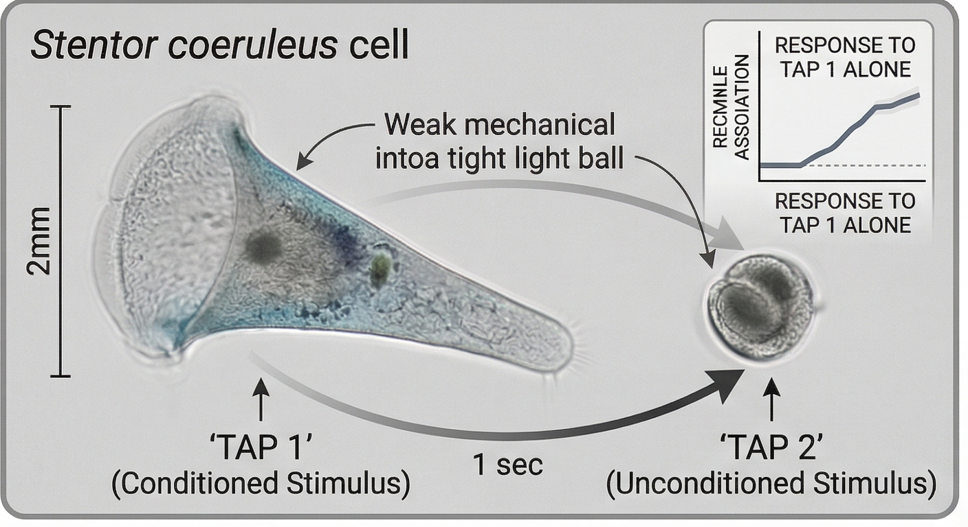

Perhaps the most striking recent advance comes from Sam Gershman’s group at Harvard. In a preprint published in February 2026, Gershman and colleagues report something that would have seemed extraordinary just a few years ago: associative learning — Pavlovian conditioning — in the single-celled ciliate Stentor coeruleus.

The experimental design was elegant. Stentor cells anchor themselves in a Petri dish and contract rapidly into a ball when disturbed by mechanical stimulation, a defensive response that costs them feeding time. The researchers delivered a weak tap (which alone rarely triggered contraction) one second before a stronger tap (which reliably did). After repeated pairings, the cells began showing enhanced contraction responses to the weak tap alone. Control experiments ruled out non-associative explanations such as sensitization or arousal, and parametric manipulation of the timing revealed a systematic dependence of learning on both inter-trial and inter-stimulus intervals.
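To make the logic of the pairing protocol concrete, here is a minimal sketch using the Rescorla-Wagner rule, a standard textbook model of associative learning. It is offered only as an illustration: it is not the analysis used in the preprint, and the learning rate `alpha`, the asymptote `lam`, and the function name are hypothetical choices.

```python
def rescorla_wagner(n_trials, alpha=0.2, lam=1.0, paired=True):
    """Return the associative strength of the weak tap after each trial.

    alpha  -- learning rate (hypothetical value, not from the preprint)
    lam    -- maximum association the strong tap can support (assumed 1.0)
    paired -- if False, the strong tap never follows the weak tap (control)
    """
    strength = 0.0
    history = []
    for _ in range(n_trials):
        # On paired trials the outcome is the strong tap; otherwise nothing.
        outcome = lam if paired else 0.0
        # Update in proportion to the prediction error.
        strength += alpha * (outcome - strength)
        history.append(strength)
    return history


paired = rescorla_wagner(10)
unpaired = rescorla_wagner(10, paired=False)
print([round(v, 3) for v in paired])
print(unpaired)
```

In this toy model the association grows only when the weak tap is reliably followed by the strong one, which is the pattern an unpaired control is designed to distinguish from sensitization.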

In other words, a single-celled organism — with no nervous system whatsoever — was learning to predict that a weak stimulus signaled an impending stronger one, and adjusting its behavior accordingly. This is Pavlovian conditioning, the same fundamental form of associative learning that Pavlov demonstrated with his dogs, operating in a single cell roughly 2 millimeters long.

The researchers conclude that this capability suggests an ancient evolutionary origin for associative learning, one that preceded the emergence of multicellular nervous systems by hundreds of millions of years.

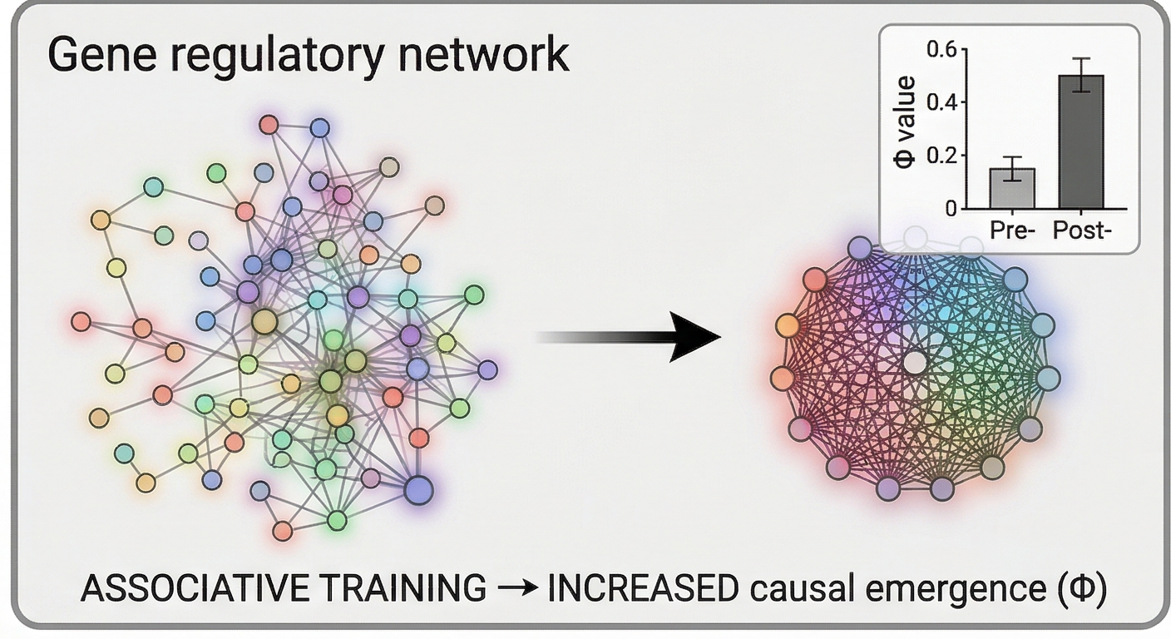

When Gene Networks Learn, Agents Emerge

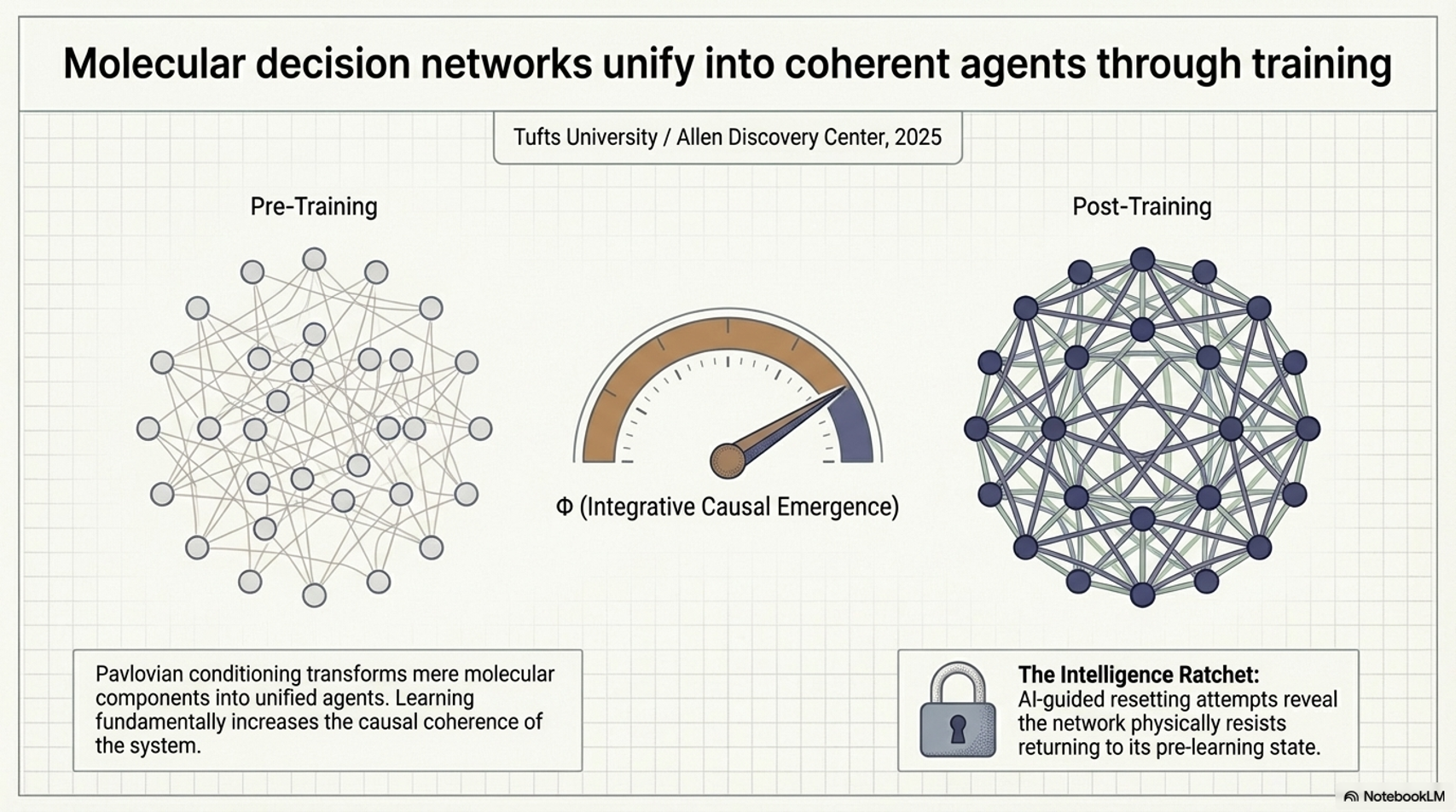

This finding dovetails powerfully with computational work from Michael Levin’s group at the Allen Discovery Center at Tufts University. As I discussed in a recent analysis, Levin’s team (Pigozzi, Goldstein & Levin, 2025) modeled 29 biological gene regulatory networks (GRNs) and subjected them to a Pavlovian-style associative conditioning protocol — essentially “training” these molecular-level decision networks to respond to paired stimuli. The results, published in Communications Biology, were remarkable: successfully conditioned networks showed increased integrative causal emergence (measured as higher Φ), meaning they became more unified and coherent in their causal structure after learning.

The implication is profound. Learning doesn’t just change a system’s input-output mapping — it increases the degree to which the system functions as an integrated, emergent whole. Training transforms a collection of molecular components into something that more closely resembles a coherent agent. In follow-up work, the team explored how AI could be used to “reset” learned behaviors in GRN models, revealing an “intelligence ratchet” dynamic where some acquired responses become progressively harder to erase — the system resists returning to its pre-learning state.

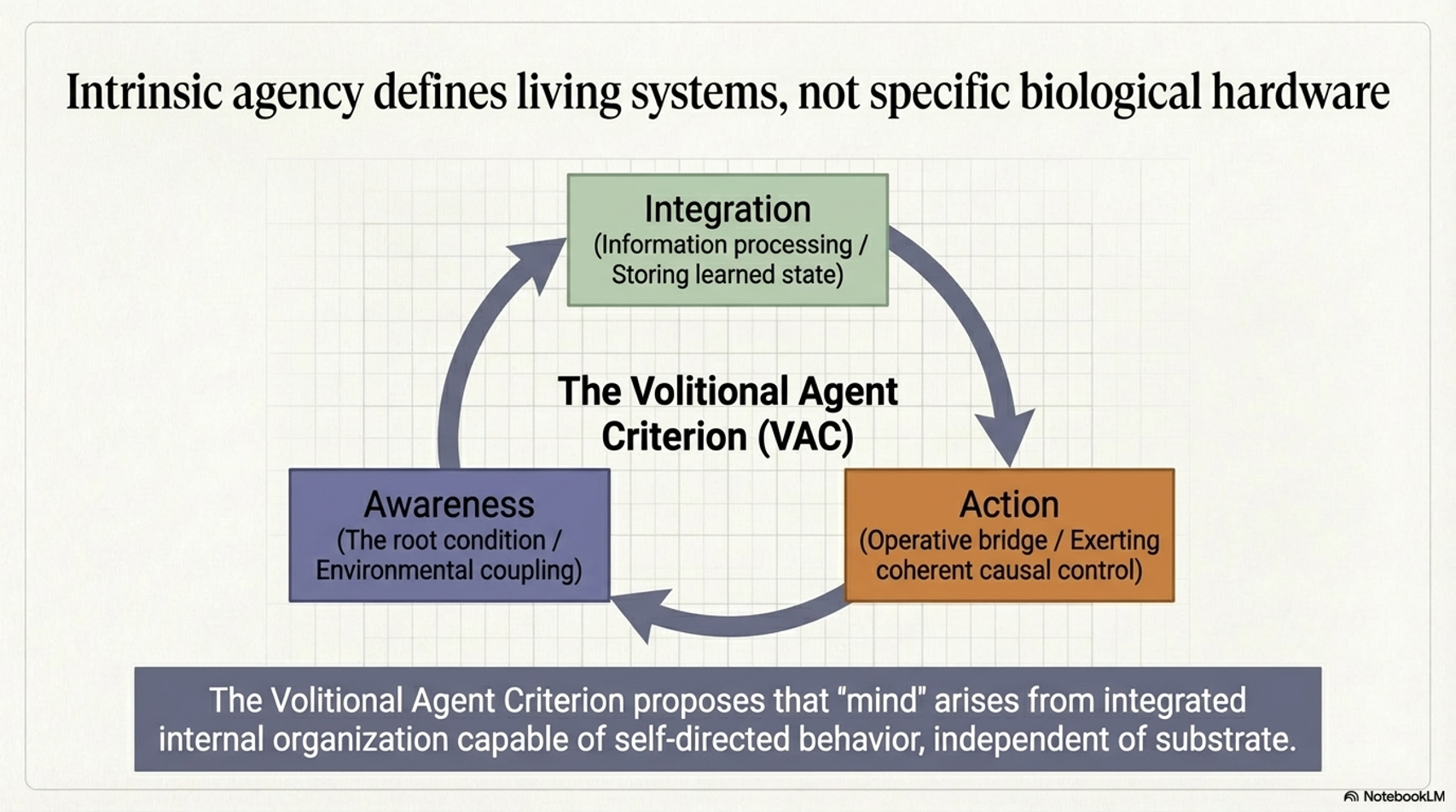

These results support a framework I have elaborated in the Volitional Agent Criterion (VAC): that agency and cognition are not mystical add-ons that appear only after brains evolve, but scale-free properties of sufficiently organized complex systems — systems that can integrate information, store learned state, and exert coherent causal control over their future behavior.

The VAC framework is deeply complementary to Michael Levin's TAME (Technological Approach to Mind Everywhere). Where TAME provides the theoretical scaffolding for why we should recognize goal-directedness across scales — arguing that mentalistic terminology, when applied with empirical rigor and fecundity, is scientifically productive — VAC provides the operational criteria for actually identifying and measuring whether a system qualifies as a volitional agent. TAME tells you to look for agency; VAC tells you how to detect it.

The Volitional Agent Criterion establishes four testable dimensions for assessing agency:

Information integration: Can the system receive, process, and retain information from its environment in ways that influence its internal state? This goes beyond mere reaction — it requires that inputs be synthesized into a coherent representation that persists beyond the stimulus itself.

Learned state storage: Does the system's behavior change based on experience in ways that persist over time? This is not genetic evolution or passive degradation, but active modification of response patterns based on feedback — the hallmark of memory at any scale.

Coherent causal control: Can the system exert goal-directed influence on its future states? Does it demonstrate error correction, homeostatic regulation, or problem-solving that adjusts means to achieve consistent ends across varying conditions?

Volitional capacity: Does the system demonstrate agency — the ability to choose among alternative strategies to achieve goals? This is the highest tier: not just responding or learning, but exhibiting flexibility in how goals are pursued.
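The four dimensions above can be recorded as a simple checklist. The sketch below is one hypothetical way to do that in Python. The class name, the yes/no scoring, and the example values are assumptions made for illustration and are not part of the published criterion.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class VACAssessment:
    """Yes/no judgments on the four VAC dimensions for one system."""
    information_integration: bool
    learned_state_storage: bool
    coherent_causal_control: bool
    volitional_capacity: bool

    def dimensions_met(self) -> int:
        # Count how many of the four dimensions were judged as satisfied.
        return sum(getattr(self, f.name) for f in fields(self))

    def unmet(self) -> list[str]:
        # Names of the dimensions that were judged as not satisfied.
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical system that learns but shows no choice among strategies.
example = VACAssessment(True, True, True, False)
print(example.dimensions_met())
print(example.unmet())
```

A binary score per dimension is the simplest possible encoding. A graded score would fit just as well if the experimental evidence supports degrees of each capacity.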

These criteria operationalize TAME's more abstract concepts. When Levin speaks of "agents" and "goals," VAC provides the empirical checklist. When TAME argues for the fecundity of cognitive language, VAC specifies what experimental outcomes would justify that language. Together, they create a two-part methodology: TAME establishes the theoretical legitimacy of attributing cognition to non-neural systems; VAC provides the measurement framework to do so rigorously.

What Levin's group has shown computationally in gene regulatory networks, and what Gershman's group has now demonstrated empirically in living Stentor, is that these VAC criteria are satisfied at the molecular and unicellular level. These systems integrate information (sensory feedback modulates internal state), store learned states (conditioning produces persistent behavioral changes), and exert coherent causal control (they solve problems through trial and error). In the case of Stentor roeseli, discussed in the previous article, they also demonstrate volitional capacity (choosing among four distinct behavioral strategies based on context).

Critically, these are not reductive mechanistic explanations that dissolve agency into mere chemistry. They are measurements of genuine emergence — the appearance of higher-order causal properties that cannot be predicted from component behavior alone. The increase in Φ (integrated information) observed in conditioned GRNs is not a metaphor. It is a quantitative signature that learning creates new causal structures at the system level. The VAC framework, paired with TAME, allows us to recognize and measure this emergence wherever it occurs.

From Pong to Doom: Neurons on a Chip

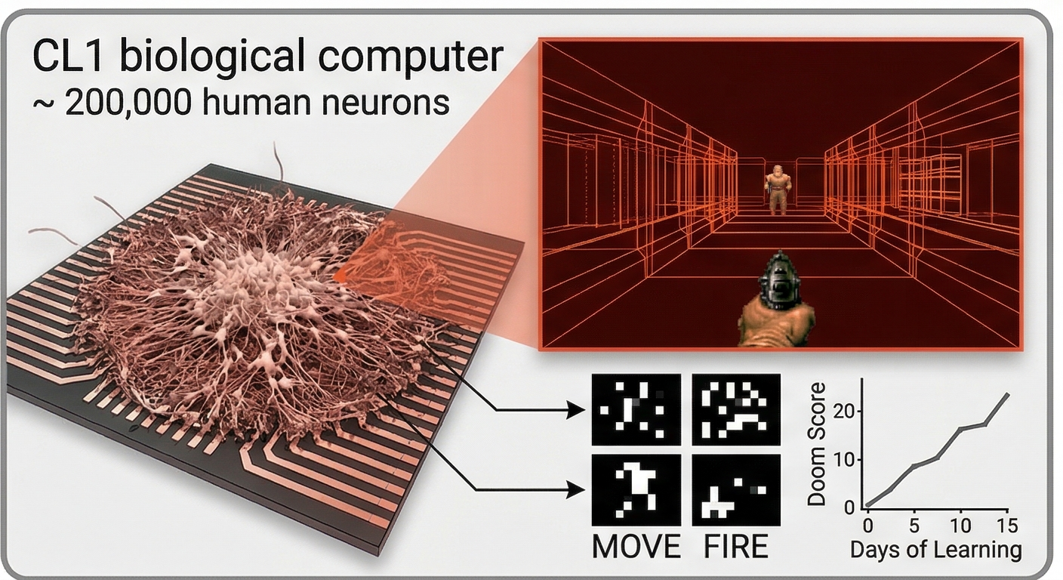

Against this backdrop, recent achievements in biocomputing take on deeper significance than the headlines suggest. In early March 2026, Australian biotech company Cortical Labs demonstrated that their CL1 biological computer — approximately 200,000 human neurons derived from induced pluripotent stem cells, grown on a high-density microelectrode array — had learned to play the classic first-person shooter Doom.

This builds on the company’s earlier DishBrain work, published in Neuron in 2022, where approximately 800,000 neurons learned to play Pong. That earlier achievement took 18 months. The Doom milestone was accomplished with a quarter of the neurons, in roughly a week, thanks to the CL1’s new interface that allows anyone to program biological neurons using Python. An independent developer, Sean Cole, accomplished the integration.

The jump from Pong to Doom is significant. Pong involves a direct, two-dimensional sensory-motor relationship: the ball moves, the paddle moves. Doom is a three-dimensional environment with exploration, enemies, combat, and real-time decision-making. The game’s visual information had to be translated into patterns of electrical stimulation delivered to the neural culture, and the neurons’ firing patterns were decoded back into in-game actions — moving, turning, and firing.
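The encode and decode steps of such a closed loop can be sketched in a few lines. Everything below is hypothetical: the function names, the eight-electrode layout, the single "enemy offset" feature, and the four-way readout are illustrative assumptions, and none of it reflects the actual CL1 interface or the scheme used in the Doom demonstration.

```python
ACTIONS = ("turn_left", "move_forward", "turn_right", "fire")


def encode_observation(enemy_offset, n_electrodes=8):
    """Map a horizontal enemy offset in [-1, 1] to a one-hot stimulation pattern.

    Hypothetical encoding: the electrode index tracks where the enemy
    appears on screen, from far left (0) to far right (n_electrodes - 1).
    """
    clipped = max(-1.0, min(1.0, enemy_offset))
    index = min(n_electrodes - 1, int((clipped + 1.0) / 2.0 * n_electrodes))
    return [1 if i == index else 0 for i in range(n_electrodes)]


def decode_action(spike_counts):
    """Choose the action whose (assumed) readout region fired the most."""
    if len(spike_counts) != len(ACTIONS):
        raise ValueError("expected one spike count per action")
    best = max(range(len(ACTIONS)), key=lambda i: spike_counts[i])
    return ACTIONS[best]


pattern = encode_observation(0.0)      # enemy near the center of the screen
action = decode_action([0, 2, 9, 1])   # made-up spike counts for illustration
print(pattern, action)
```

In a real system the loop would repeat many times per second, with the game's outcome determining what feedback stimulation the culture receives next.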

The neurons are not skilled players. As Cortical Labs’ Chief Scientific Officer Brett Kagan notes, their performance resembles that of a complete beginner who has never seen a computer, which, to be fair, the neurons haven’t. But they show evidence of adaptive, goal-directed learning: they can locate enemies, shoot, and navigate corridors, improving over time through a feedback-based reinforcement process. The cells gradually reorganize their activity in response to structured feedback. This form of learning exploits the natural tendency of neurons toward synaptic plasticity: connections are physically restructured to minimize chaotic feedback and develop coherent response pathways.

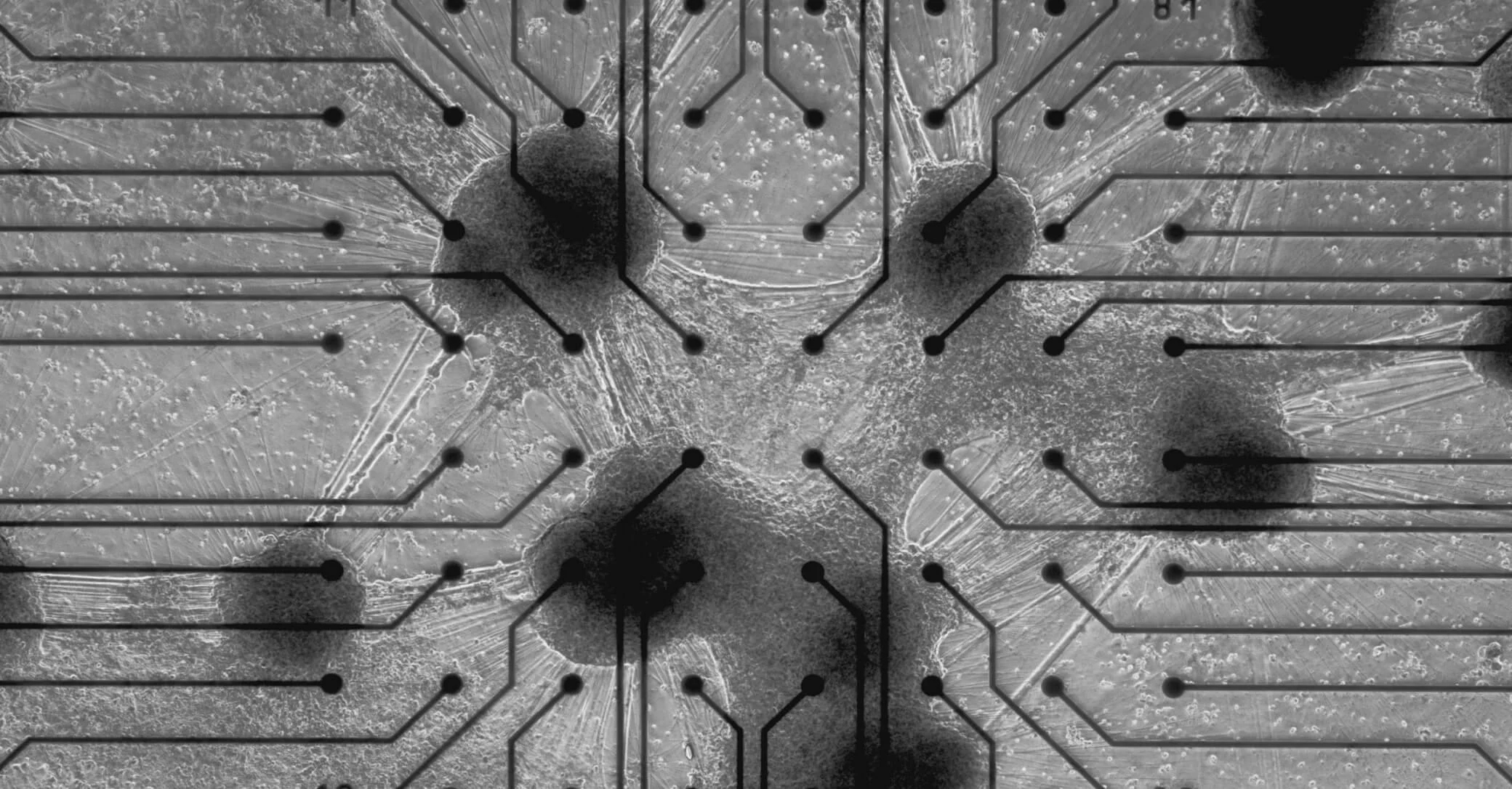

Cortical Labs is currently selling CL1 biosoftware licenses to researchers, opening the door to entirely new paradigms for studying embodied neural computation: the interaction of neural tissue with environmental feedback over time. With the CL1, researchers can buy a fully grown, ready-to-interface biological computer running 200,000 human neurons, program it with standard machine learning frameworks, and watch as it self-organizes its neural circuitry to solve problems. This is not a simulation. It is not neuron modeling software. It is actual living human neural tissue combined with silicon chips learning in real time.

Actual neurons fully integrated on the electronic circuits of a silicon chip

Cortical Labs' own promotional copy describes the system this way: "Our technology merges biology with traditional computing to create the ultimate learning machine. Creating what others only imagined: the world's first code-deployable biological computer. Real neurons are grown directly on our custom chips, creating an intelligence that learns intuitively, with remarkable efficiency. Unlike traditional AI, our neural systems require minimal energy and training data to master complex tasks. This isn't just a new computer. It's computing, reimagined."

Even Jelly Can Remember

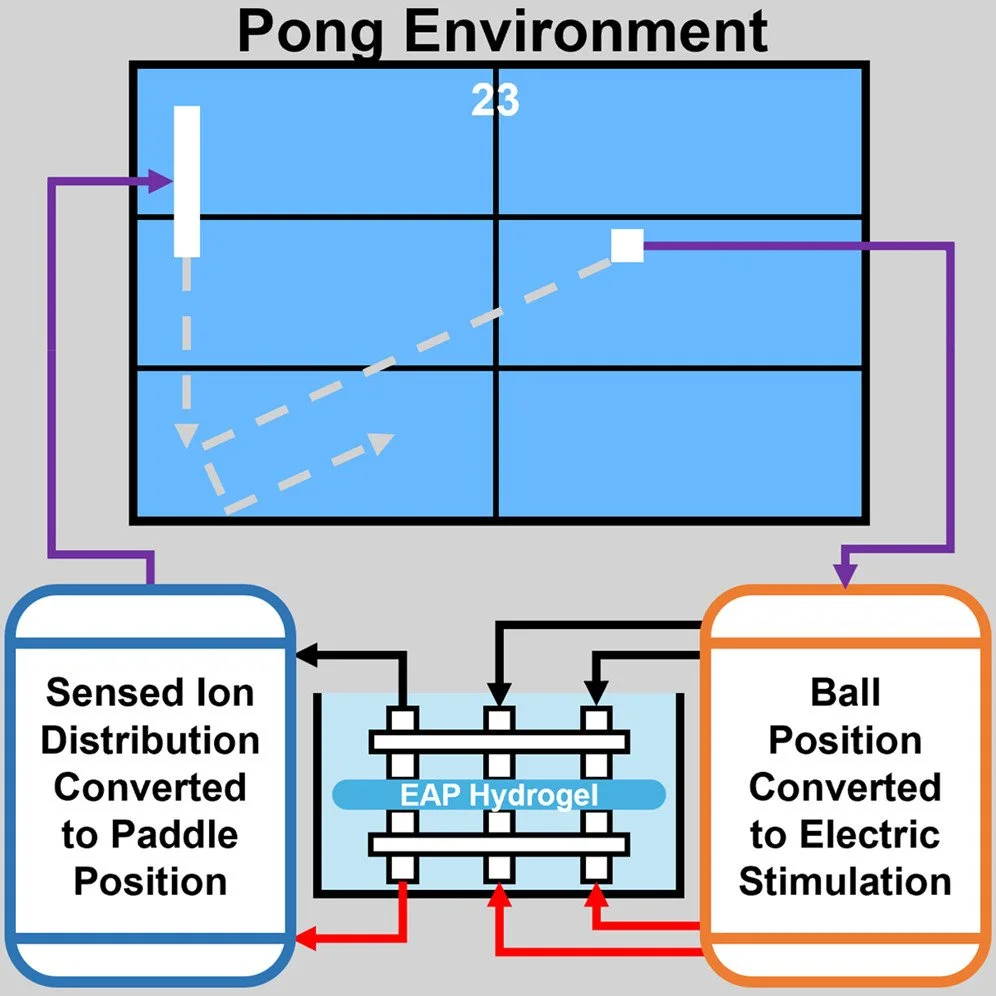

What makes the Cortical Labs achievement especially significant is not just the complexity of the task, but how it fits into a broader pattern. Consider that in August 2024, researchers at the University of Reading demonstrated that a simple polymer hydrogel — an electro-active “jelly” with no biological components whatsoever — could be interfaced with a game of Pong and show measurable improvement over time. Ions within the hydrogel’s polymer matrix moved in response to electrical stimulation, creating a form of emergent “memory” that resulted in longer rallies. The gel improved its accuracy by approximately 10 percent and reached peak performance in about 20 minutes.

An illustration of how the researchers set up the experiment, including the six, electrode-connected grid.

As lead researcher Yoshikatsu Hayashi put it, the basic principle operating in both neurons and hydrogels is the same: ion migration and distribution can function as a memory mechanism that correlates with sensory-motor feedback loops. The material was not designed for this purpose — the learning behavior was an emergent property of the ion dynamics when coupled to a structured environment.
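One way to picture ion distribution acting as memory is a leaky accumulator: stimulation shifts the ion distribution, and the shift relaxes only slowly once stimulation stops. The sketch below is a deliberately simplified toy, not the model from the Reading study. The `decay` and `gain` values and the function name are hypothetical.

```python
def ion_memory_trace(stimulus, decay=0.1, gain=1.0):
    """Track a toy 'ion displacement' level under a sequence of stimuli.

    decay -- fraction of the displacement lost per step (assumed value)
    gain  -- displacement added per unit of stimulation (assumed value)
    """
    level = 0.0
    trace = []
    for s in stimulus:
        # Past displacement partly persists, and new stimulation adds to it.
        level = (1.0 - decay) * level + gain * s
        trace.append(level)
    return trace


# Three steps of stimulation followed by three steps of silence.
trace = ion_memory_trace([1, 1, 1, 0, 0, 0])
print([round(v, 3) for v in trace])
```

The level stays well above zero after the input ends, so the material's later response still depends on its stimulation history. That lingering dependence is the minimal sense of "memory" at issue here.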

Now place these findings along a continuum:

• A polymer hydrogel (no biology at all) demonstrates emergent memory through ion dynamics, learning to play Pong.

• A single-celled protist (Stentor coeruleus) demonstrates Pavlovian associative conditioning through intracellular signaling networks.

• Gene regulatory networks (computational models of real molecular circuits) show increased integrative causal emergence — becoming more agent-like — after associative training.

• 200,000 human neurons on a chip learn to navigate a complex 3D game environment in days.

The progression is not merely one of increasing complexity. It reveals something more fundamental: that the capacity for adaptive, goal-directed behavior — for learning, memory, and rudimentary agency — is not a threshold phenomenon that suddenly appears at some critical level of neural complexity. It is graded, distributed, and present across vastly different substrates, from synthetic polymers to unicellular organisms to cultured neural tissue.

Cognition as a Scale-Free Property: From Spacememory to Ionic Gels

This observation raises a profound possibility. If a simple ionic hydrogel can spontaneously develop memory through the dynamics of charged particles in a polymer matrix, what does this suggest about more fundamental substrates? The principle that ion migration can encode information through spatial distribution patterns may point toward something deeper: that space itself, at quantum scales, might possess intrinsic memory properties through analogous mechanisms.

This connects to the emerging theoretical framework of spacememory — the hypothesis that the fabric of spacetime may retain information through quantum field dynamics in ways structurally similar to how ions encode memory in a hydrogel. If correct, biological memory systems might not be generating information ex nihilo, but rather accessing and interfacing with information already encoded in the quantum structure of space itself.

Under this view, neurons and even simple ionic gels could represent specialized systems for reading and writing to a more fundamental memory field — a possibility that reframes the hydrogel experiment not as a curiosity, but as evidence that memory may be a property distributed across scales, from synthetic polymers to biological tissue to spacetime itself.

This convergence of evidence is precisely what the Volitional Agent Criterion framework was developed to address. The VAC proposes that what distinguishes living from non-living systems — and what gives rise to “mind” in its most fundamental sense — is not any specific biological structure but the emergence of intrinsic agency: coherent, self-directed behavior arising from integrated internal organization that is substrate-independent.

A Stentor cell, a blob of neural tissue, a gene network, an ionic polymer — all are capable of exhibiting this fundamental property when sufficiently organized and coupled to an environment that allows learning. The neurons in Cortical Labs' system are not special because they are neurons. They are special because they represent one point on a vast continuum of systems capable of integrating information, maintaining learned state, and directing that learned state toward coherent goals.

In this framework, awareness is the root condition, and agency is its operative bridge into action. A gene regulatory network that becomes more causally integrated after conditioning is moving along this continuum. A Stentor cell that learns to associate two stimuli is exercising a primitive form of the same capacity. And 200,000 neurons on a chip that learn to navigate Doom are demonstrating it at a higher level of sophistication — but not a fundamentally different kind of phenomenon.

The uncomfortable question this raises for neuroscience is significant: if Pavlovian conditioning can occur in a single cell, and if emergent memory can arise in a glob of jelly, then what exactly are brains for? The answer, we suggest, is not that brains invent cognition from scratch, but that they scale, refine, and integrate cognitive capacities that already exist at more fundamental levels of organization. Synaptic networks did not create memory. They built upon memory mechanisms that were already operative in the molecular and subcellular architecture of their constituent cells, and that perhaps even derive from the quantum memory matrix dynamics of spacetime itself.

If memory is indeed a fundamental process rooted in the quantum geometrodynamic structure of spacetime — rather than a special circuit found only in neural tissue — then we would expect to find memory-like capacities distributed across scales of organization, from molecular networks to cells to organisms. And that is precisely what the evidence now shows.

Looking Forward

The demonstration that human neurons can learn to play Doom is a technical milestone, but its deeper significance lies in what it reveals about the nature of biological computation. These neurons were not programmed. They were not given instructions. They were coupled to an environment and allowed to self-organize through feedback — the same basic principle at work in a Stentor cell contracting in anticipation of a disturbance, or in a hydrogel’s ions redistributing to track a virtual ball.

The work from Cortical Labs, from Gershman’s Stentor experiments, from Levin’s GRN conditioning studies, and from the hydrogel research at Reading, together paint a picture that is becoming increasingly difficult to ignore: cognition is not a product of brains. It is a scale-free property of organized matter — a fundamental feature of the informational architecture of nature. Brains are extraordinary instruments for scaling this property to remarkable heights, but they are not where the story begins.

The story begins much deeper — perhaps as deep as the quantum fabric of spacetime itself. And the evidence for this is no longer merely theoretical. It is being demonstrated, experiment by experiment, in labs around the world.

References

1. Doan, N., Theroux, A., Ramdas, T., et al. (2026). Associative learning in the protozoan Stentor coeruleus. bioRxiv. doi: 10.64898/2026.02.25.708045

2. Pigozzi, F., Goldstein, A. & Levin, M. (2025). Associative conditioning in gene regulatory network models increases integrative causal emergence. Communications Biology, 8, 1027. doi: 10.1038/s42003-025-08411-2

3. Kagan, B.J. et al. (2022). In vitro neurons learn and exhibit sentience when embodied in a simulated game-world. Neuron, 110, 3952–3969. doi: 10.1016/j.neuron.2022.09.001

4. Strong, V., Holderbaum, W. & Hayashi, Y. (2024). Electro-Active Polymer Hydrogels Exhibit Emergent Memory When Embodied in a Simulated Game-Environment. Cell Reports Physical Science, 5, 102151. doi: 10.1016/j.xcrp.2024.102151

5. Pigozzi, F., Cirrito, T. & Levin, M. (2025). AI-Guided Resetting of Memories in Gene Regulatory Network Models. bioRxiv. doi: 10.1101/2025.09.10.675114

6. Brown, W.D. (2025). The Volitional Agent Criterion: Understanding How Agency and Awareness Define Living Systems Across Biological and Artificial Domains. doi: 10.1234/ISF.VA_Criterion2025

7. Brown, W.D. (2024). Memory Without Neurons: New Evidence of Learning in Single Cells. NovoSciences / International Space Federation.

8. Brown, W.D. (2025). When Gene Networks Can Learn, “Mind” Stops Being a Brain-Only Concept. NovoSciences / International Space Federation.

9. Levin, M. & Resnik, D.B. (2026). Mind Everywhere: A Framework for Conceptualizing Goal-Directedness in Biology and Other Domains—Part Two. Biological Theory. doi: 10.1007/s13752-025-00524-5